For the longest time, I’ve used Livejournal for my blog, but recently I thought it might be good to do something different, as I felt mine was looking a bit dated. There are currently a number of options for those who want to blog their thoughts and ideas to the world, such as Blogger, TypePad, and WordPress. Those are all good options, with various features depending on what one is looking for and one’s technical level. For me, I wanted something that allowed me to build my site, to as great a degree as possible, with the tools that I use for my daily job. My favorite tool, and the one I use more than anything, is the Vim editor. Secondly, I use Git and, specifically for publishing Git-managed projects, Github.

You see, I don’t really like most graphical UIs. I find them cumbersome, and they often just get in the way of doing simple things. Also, since I work so much on automating so many different things, I’m just innately predisposed to doing work on the command line. Hence, I gravitate to tools that suit my modus operandi.

While I was speaking with my friend Brian Aker, he mentioned a project called Jekyll. When he told me I could simply use Git and Vim to edit and manage it, that was all I needed to hear.

How to build your site with Jekyll

I decided not to even try to migrate my old blog to the new site; it is sufficient for me to refer people to Livejournal for old posts. However, Jekyll offers jekyll-import as a means to import pages from Drupal, WordPress, Google Reader, Joomla, etc. (though not Livejournal… yet).

First steps

This post is intended to keep things very simple but still get you up and running. You are welcome to review the more detailed features of Jekyll on their site.

Prerequisites

Install Jekyll

First, you obviously have to install Jekyll. Before doing so, make sure RVM is set up. Then install the gem:

gem install jekyll

Create a post

Create a markdown page with the contents you want. The file must be named with a date prefix, in the form YYYY-MM-DD-my-post.markdown, and live in the _posts directory. Example:

vi _posts/2014-03-21-my-post.markdown

Serve up the page locally

Jekyll comes with the ability to serve up the page locally with a lightweight development web-server to view your changes.

jekyll serve

And then access the page

http://host:4000

I run everything in a vmware instance, so of course the host value is the IP address of my virtual machine.
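To tie the post-creation and preview steps together, here is a minimal sketch of a shell helper that builds the dated file name and writes the YAML front matter that Jekyll expects at the top of a post. The function names post_path and new_post are hypothetical (they are not part of Jekyll), and the sketch assumes a layout named post exists, as with the _layouts/post.html file mentioned later in this post.

```shell
#!/bin/sh
# Hypothetical helper (not part of Jekyll): build a post path of the form
# _posts/YYYY-MM-DD-title-slug.markdown from a date and a title.
post_path() {
    post_date="$1"
    post_title="$2"
    # Lowercase the title, turn runs of non-alphanumerics into single
    # hyphens, and trim any leading or trailing hyphen.
    slug=$(printf '%s' "$post_title" \
        | tr '[:upper:]' '[:lower:]' \
        | sed -e 's/[^a-z0-9]\{1,\}/-/g' -e 's/^-//' -e 's/-$//')
    printf '_posts/%s-%s.markdown\n' "$post_date" "$slug"
}

# Hypothetical helper: create the post file with minimal front matter
# and print its path. Assumes a layout called "post" exists.
new_post() {
    path=$(post_path "$1" "$2")
    mkdir -p _posts
    {
        printf '%s\n' '---'
        printf 'layout: post\n'
        printf 'title: "%s"\n' "$2"
        printf '%s\n\n' '---'
    } > "$path"
    printf '%s\n' "$path"
}
```

With these defined, something like vi "$(new_post 2014-03-21 'My Post')" opens the new file in Vim, and jekyll serve then previews it as described above.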

At this point, the generated site needs to be made publicly available. Jekyll powers Github Pages, so the next steps cover how to set up both Github Pages and DNS.

Setting up Github Pages

In this section, I describe the process for setting up Github Pages. The documentation on the site is excellent, but there are some things I thought would be helpful to describe.

Create a Github Pages repository

You will need to create a Github Pages repository. The instructions on how to do this are very simple per the documentation.

- I followed the directions for Project site, though you may need an organizational site.

- I selected Start from scratch.

I created a repository called patg.net.

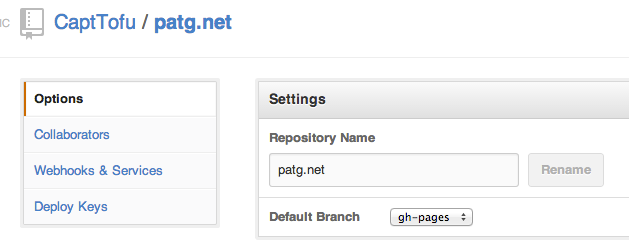

Set the default branch in the repository to gh-pages

As the header suggests, you will want to set the default branch in your repository to gh-pages (using regular Github).

Set up your repository

Inside your site’s directory, initialize a Git repository and add everything:

$ git init

$ git add .

Then add the remote to the Github Pages repository. In my case, I ran the following:

git remote add origin https://github.com/CaptTofu/patg.net.git

Commit the changes

Add the post and commit. (The explicit add is for a new post written later; right now it is not needed, since the previous step already added everything.)

$ git add _posts/2013-03-21-new-post.markdown

$ git commit -m "Add new post"

Push the changes

Once you have viewed the site locally with the development web-server and are certain it is what you wish to publish, push to the gh-pages branch:

$ git push origin gh-pages

View your changes on your site!

Initially, if you don’t have DNS set up, you will view your site on Github Pages as something like:

http://capttofu.github.io/patg.net

If you are going to keep it this way, you will need to set _config.yml to have the following:

baseurl: /

If you switch DNS to this being a primary, non-sub-domain site, this line can be removed.
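The project-site address shown above follows from the remote URL used earlier. As a rough sketch of that mapping, the hypothetical helper below (pages_url is my own name, not a Git or Github command) assumes an HTTPS remote of the form https://github.com/OWNER/REPO.git and lowercases the owner to match the URL in this post.

```shell
#!/bin/sh
# Hypothetical helper: derive the default Github Pages project-site URL
# from an HTTPS remote URL of the form https://github.com/OWNER/REPO.git
pages_url() {
    remote="$1"
    # Strip the scheme/host prefix and the optional .git suffix.
    path=${remote#https://github.com/}
    path=${path%.git}
    owner=${path%%/*}
    repo=${path#*/}
    # Hostnames are case-insensitive; lowercase matches the URL shown above.
    owner_lc=$(printf '%s' "$owner" | tr '[:upper:]' '[:lower:]')
    printf 'http://%s.github.io/%s\n' "$owner_lc" "$repo"
}
```

For the remote in this post, pages_url https://github.com/CaptTofu/patg.net.git prints http://capttofu.github.io/patg.net.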

Setting up DNS

Depending on your provider, you will need to set up DNS either with a CNAME record pointing to the new blog or, as in my case, with A records. I wanted this to be http://patg.net and not a subdomain site, so I had to create two A records pointing to:

- 192.30.252.153

- 192.30.252.154
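Once the records have propagated, a quick check can confirm that both addresses resolve. The sketch below is only an illustration: has_pages_records is a hypothetical helper that reads addresses on standard input, one per line, which is the shape of output that dig +short A patg.net produces. The two addresses are simply the ones listed above.

```shell
#!/bin/sh
# Hypothetical helper: read resolved addresses on stdin (one per line) and
# succeed only if both A records listed in this post are present.
has_pages_records() {
    addrs=$(cat)
    for want in 192.30.252.153 192.30.252.154; do
        # -x matches the whole line, -F treats the address as a fixed string.
        printf '%s\n' "$addrs" | grep -qxF "$want" || return 1
    done
}
```

A typical use would be dig +short A patg.net | has_pages_records && echo "DNS looks right".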

Add a CNAME file

The directions state you will need a file called CNAME, which simply contains:

patg.net

At this point your site should be fully functioning!
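Creating the CNAME file is a one-liner, but it is easy to paste a full URL into it by mistake. Here is a minimal sketch of a guard against that; write_cname is a hypothetical helper of my own, and it assumes it is run from the root of the site repository.

```shell
#!/bin/sh
# Hypothetical helper: write a CNAME file containing only the bare domain.
# Refuses values that look like URLs or paths (for example http://patg.net).
write_cname() {
    domain="$1"
    case "$domain" in
        ""|*://*|*/*)
            echo "expected a bare domain such as patg.net" >&2
            return 1
            ;;
    esac
    printf '%s\n' "$domain" > CNAME
}
```

After write_cname patg.net, the file can be added, committed, and pushed to the gh-pages branch like any other change.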

Other features and enhancements

There are a number of other things to add to a Jekyll site. This section covers those which my site needed.

Comments

What about comments? Well, for me, I decided to use Disqus. All I had to do was add the div tag that Disqus provides on their site to the file _layouts/post.html:

<div class="post">

<h2>Ansible provisioning of a Galera Cluster (Percona XtraDB Cluster)</h2>

Building a MySQL Galera cluster with Docker and Ansible

Galera replication has been very useful as a MySQL HA database backend that is relatively simple to set up and manage for various platform services at HP. Asynchronous replication is fine; it’s just that for complex cloud systems a simple replication solution is desired, and asynchronous replication would require PRM (corosync/pacemaker). Over the past two years, I’ve worked with both SaltStack and Chef for provisioning Galera clusters. Each had its challenges, but they had some things in common, particularly the issue of bootstrapping the first node and subsequently bringing up the other nodes, each with a successively augmented list of member nodes (other than itself) for wsrep_cluster_address, and then finally updating the first bootstrap node to have a proper list instead of gcomm://.

There have been a few projects I’ve been analyzing since working for HP ATG (Advanced Technology Group). Two of those have been Docker and Ansible. I did some automation work with each when I first looked into them, and I have been meaning to go back, clean up that work, and make it more modular and easier to share with those in the community who need to use both. What I had, basically, was an Ansible playbook that built a Percona XtraDB Cluster (Galera) node Docker image. I realized it was novel, but for better re-usability, I decided to make it possible for the playbook to be used in any setup, not just Docker.

Initially, I thought this task would be simple. But when you are dealing with provisioning tools and setup, you should always know that there will be continual problems to solve. I find waiting for a provisioning run to complete worse than watching paint dry, because at least with painting, you paint the surface and it simply dries (unless you are in Singapore on a humid day!). With provisioning, you often have to wait for it to fail. It would be like watching paint dry and then having to paint it all over again because it was the wrong color once dried.

The Ansible learning curve wasn’t too difficult since, like SaltStack, it uses YAML for its “playbooks” (Salt “states”) and Jinja for its templates. Ansible is different from Salt in that you run a playbook which, when executed, uses SSH to connect to the nodes it is managing and execute on them, whereas Salt uses a master-minion setup.

Additionally, I have been familiarizing myself with Docker. What is Docker? It’s a way to create lightweight, portable, self-sufficient containers from any application. Or, in the words of one of their developers, “awesome-sauce on top of lxc”. More or less, it’s great functionality built on top of lxc.

Note: I will cover Docker specifically, and in more detail, in a later post.

The container I use for this post is mostly bare-bones though with ssh installed and set up so that when you launch the container, you can ssh into it and run ansible against it.

How it works

The setup is relatively straightforward. I have one repo that I use to launch the containers and build a hosts inventory file for Ansible. There are two playbooks: a cluster playbook and a haproxy playbook. They in turn use the ansible-galera and ansible-galera-haproxy roles.

The real functionality is in the ansible-galera role. This took a bit of work to get right. First off, you have to make sure to get the order correct when launching the three containers and having the first one bootstrap correctly. Also, upstart doesn’t work correctly with Docker, so I ended up using the init script /etc/init.d/mysql. A docker container needs an entrypoint. An entrypoint is what the container executes upon launching. For that, I created a script that launches:

- mysql

- pyclustercheck

- sshd

The reason I use pyclustercheck is that xinetd doesn’t work correctly in the Docker container. It runs, and mysqlchk executes, but nothing is returned when you telnet to port 9200. Pyclustercheck essentially replaces clustercheck, the command line script used by xinetd, and has a web server implemented, so there is no need for either clustercheck or xinetd.

How it works

The core functionality of this playbook is how it determines if a node is the first node and if it should be set to bootstrap (wsrep_cluster_address=gcomm://). I simply lifted and modified much of the functionality from the work I had done in a Salt state for Galera.

The first thing to look at, and this is crucial, is tasks/install_galera.yml in the ansible-galera repo. Lines 14-16 set a variable bootstrap_check to “bootstrap” if mysql has not been installed and “installed” if it has. This needs to be known in order to know if mysql (PXC) is being installed for the first time and that bootstrapping the node would be the correct thing to do.

Next, observe the template in the ansible-galera repo, templates/etc/mysql/my.cnf.j2, lines 54-73.

On line 55, a list is defined for containing members of the cluster. Line 58 defines a simple boolean used to determine if the cluster node is being bootstrapped. On lines 61 through 63, bootstrap_cluster is set to 1 if the node being provisioned is the first in the list and bootstrap_check.stdout subscript 0 is set to “bootstrap”, which means the node being provisioned needs to be the bootstrap node.

Lines 65 through 71 add the IP address of a node from the hosts defined in the ansible hosts inventory file under [galera_cluster] if the IP address is not the IP address of the node being provisioned (bug in Ubuntu).

Line 72 joins the hosts together so that there is a line such as wsrep_cluster_address=gcomm://172.0.17.1,172.0.17.2....

Finally, in tasks/configure_galera.yml, the my.cnf is written out, with the cluster address that the playbook worked so hard at creating interpolated into it, and mysql is restarted.

There are other pieces of functionality in the code that I won’t go into detail about; they are straightforward and can be perused in the ansible-galera repo.
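To make the bootstrap decision described above easier to follow, here is a rough shell sketch of the same logic. This is not the Jinja template from the ansible-galera repo; cluster_address is a hypothetical function of my own, and its arguments (the node’s own IP, the bootstrap_check value, then the inventory hosts in order) are assumptions made for illustration.

```shell
#!/bin/sh
# Hypothetical sketch of the logic described above; not the author's template.
# Usage: cluster_address SELF_IP BOOTSTRAP_CHECK HOST...
cluster_address() {
    self="$1"
    check="$2"
    shift 2
    first="$1"
    # First host in the inventory on a fresh install: bootstrap the cluster
    # with an empty member list.
    if [ "$self" = "$first" ] && [ "$check" = "bootstrap" ]; then
        printf 'wsrep_cluster_address=gcomm://\n'
        return 0
    fi
    # Otherwise list every member except the node being provisioned.
    members=""
    for host in "$@"; do
        [ "$host" = "$self" ] && continue
        members="${members:+$members,}$host"
    done
    printf 'wsrep_cluster_address=gcomm://%s\n' "$members"
}
```

Called for the first of three nodes with bootstrap_check set to bootstrap, this prints the bare gcomm:// line; for any other node, or once mysql is already installed, it prints the comma-joined list of the other members.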

Steps to create a cluster

Requirements:

There are 4 repositories in question:

- http://github.com/CaptTofu/docker-galera.git - scripts for building the hosts file and launching/deleting containers used in testing this

- http://github.com/CaptTofu/cluster-install.git - the top-level playbook that uses both the ansible-galera and ansible-galera-haproxy roles referred to here

- http://github.com/CaptTofu/ansible-galera.git - the role for setting up the Galera cluster using PXC. Installed via ansible-galaxy

- http://github.com/CaptTofu/ansible-galera-haproxy.git - the role for setting up the haproxy setup that would utilize this cluster, installed by ansible-galaxy

Check out the docker-galera and cluster-install repositories

Check out the docker-galera and cluster-install repositories (the first two listed above). I use ~/code, but any directory where you prefer to work will do.

Build the docker image for the Galera cluster

Enter the directory for docker-galera repo and build the image:

host:~/code/docker-galera$ docker build .

Uploading context 174.1 kB

Uploading context

Step 1 : FROM ubuntu:13.04

---> eb601b8965b8

Step 2 : MAINTAINER Patrick aka CaptTofu Galbraith , patg@patg.net

---> Using cache

---> 6cc8cbe1a0db

Step 3 : RUN apt-get update && apt-get upgrade -y && apt-get clean

---> Using cache

---> f74e32f52dee

<snip>

Step 16 : ENTRYPOINT ["/usr/local/sbin/start_services.sh"]

---> Running in a1fbf763c77f

---> 5d9fadfacecf

Successfully built 5d9fadfacecf

Make a note of the image ID. In this example, it would be 5d9fadfacecf

Install the ansible-galera and ansible-galera-haproxy roles

Using ansible-galaxy, install both the ansible-galera and ansible-galera-haproxy roles

$ ansible-galaxy install --force --roles-path=/where/ever/you/want/your/roles CaptTofu.ansible-galera

$ ansible-galaxy install --force --roles-path=/where/ever/you/want/your/roles CaptTofu.ansible-galera-haproxy

Launch the docker containers

Enter the directory containing the cluster-install repository and run docker-launch-nodes.sh, passing as its sole argument the image ID recorded above when the image was built:

~/code/cluster-install$ ../docker-galera/docker-launch-nodes.sh 5d9fadfacecf

At this point, there should be 4 running containers – 3 for PXC (your Galera cluster) and 1 for haproxy (this would be essentially any application that needs to connect to the cluster) and for this exercise, a hosts file that ansible will use.

Verify that these containers are indeed running. An example:

host:~/code/docker-galera$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

0636ea03d91e a1fbf763c77f /usr/local/sbin/star 3 hours ago Up 3 hours 22/tcp, 3306/tcp, 4444/tcp, 4567/tcp, 4568/tcp, 9200/tcp galera_node3

46c4b4a2c8a8 a1fbf763c77f /usr/local/sbin/star 3 hours ago Up 3 hours 22/tcp, 3306/tcp, 4444/tcp, 4567/tcp, 4568/tcp, 9200/tcp galera_node2

4b5fac0b202a a1fbf763c77f /usr/local/sbin/star 3 hours ago Up 3 hours 22/tcp, 3306/tcp, 4444/tcp, 4567/tcp, 4568/tcp, 9200/tcp galera_node1

35a653c02872 a1fbf763c77f /usr/local/sbin/sshd 2 days ago Up 2 days 22/tcp haproxy

Run the cluster playbook

Now you can run the playbook (still from within the cluster-install repo directory):

~/code/cluster-install$ ansible-playbook -i hosts -u root cluster.yml

At this point, you will have a running cluster

Run the haproxy playbook

~/code/cluster-install$ ansible-playbook -i hosts -u root haproxy.yml

At this point, there will be a proxy node that is connected to the cluster!

Use the cluster

Determine the IP address of the HAProxy container:

~/code/cluster-install$ docker inspect haproxy|grep -i ipadd

"IPAddress": "172.17.0.125"

SSH into the HAProxy container, then connect to the cluster through the proxy on 127.0.0.1:

root@35a653c02872:/var/log# mysql -u docker -pdocker -h 127.0.0.1

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 11

Server version: 5.6.15-63.0-log Percona XtraDB Cluster (GPL), Release 25.4, wsrep_25.4.r4043

Copyright (c) 2000, 2013, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql>

And now, you are in business!

Conclusion

This cluster setup is an example of the great things one can do with Ansible and Docker, and I intend on improving the playbook and setup. Planned improvements include:

- Cleanup!

- Better setup with how to have an SSH key that can be used in the repo

- Even better algorithm for bootstrapping

- Find Ansible and Docker gurus to help give me critiques of the work

The one thing I took away from building this was how much faster it is to deploy containers than virtual machine instances. This has profound implications for software packaging and testing.

Credits

- Patrick Galbraith aka “CaptTofu” (HP ATG)

- David Busby (Percona)

<script type="text/javascript">

/* * * CONFIGURATION VARIABLES: EDIT BEFORE PASTING INTO YOUR WEBPAGE * * */

var disqus_shortname = 'patgnet'; // required: replace example with your forum shortname

/* * * DON'T EDIT BELOW THIS LINE * * */

(function() {

var dsq = document.createElement('script'); dsq.type = 'text/javascript'; dsq.async = true;

dsq.src = '//' + disqus_shortname + '.disqus.com/embed.js';

(document.getElementsByTagName('head')[0] || document.getElementsByTagName('body')[0]).appendChild(dsq);

})();

</script>

<noscript>Please enable JavaScript to view the <a href="http://disqus.com/?ref_noscript">comments powered by Disqus.</a></noscript>

<a href="http://disqus.com" class="dsq-brlink">comments powered by <span class="logo-disqus">Disqus</span></a>

</div>

<div id="disqus_thread"></div>

<script type="text/javascript">

/* * * CONFIGURATION VARIABLES: EDIT BEFORE PASTING INTO YOUR WEBPAGE * * */

var disqus_shortname = 'patgnet';

... SNIP ...

RSS Feeds

I wanted my site to be able to be picked up by a number of “Planet” sites. For this, I needed RSS. This is very easy to add. Simply run the following in the directory containing your site repository:

$ git clone https://github.com/snaptortoise/jekyll-rss-feeds

$ cp jekyll-rss-feeds/feed.xml patg.net/

$ cd patg.net

$ git add feed.xml

$ git commit -m "Add RSS feed"

$ git push origin gh-pages

That’s all!

RedCarpet

Redcarpet is the markdown library that Github Pages uses, and it offers features stock markdown doesn’t. To use it, you can install this module into the _plugins directory simply by doing the following:

$ cd patg.net/_plugins

$ wget https://raw.githubusercontent.com/nono/Jekyll-plugins/master/redcarpet2_markdown.rb

$ git add redcarpet2_markdown.rb

Add it to _config.yml:

markdown: redcarpet

redcarpet:

extensions: ["no_intra_emphasis", "fenced_code_blocks", "autolink", "strikethrough", "superscript"]

You will also need to install the gem albino for it to work:

gem install albino

Summary

With this post, you should have my example of how I set up my site as a good start for your own. Mileage may vary, but I do think this should help, along with the documentation on both the Jekyll and Github Pages websites, to get your own site set up.

As I mentioned before, there are many more options and useful bits of information I could have delved into, some of which even I haven’t fully utilized. If I feel inspired upon learning more, you can be certain to read about those realizations here on my site!